The AI Layoff Trap: The Transition to an AI Economy May Be Even Scarier Than We Thought

Revisiting taxing AI firms to promote a Pareto efficient transition

As I’ve mentioned in the past, I’ve been a science fiction fan for decades. So I grew up with sentient computer antagonists like Colossus, HAL 9000, Skynet, and AM. All of which makes me instinctively suspicious of AI.

I’ve previously argued that we should not use Kaldor-Hicks efficiency to evaluate the transition to an AI economy. Instead, we should strive for Pareto efficiency.

In brief, as I argued, there will be winners and losers from the transition to an AI economy. Under Pareto efficiency, the winners should be taxed to compensate the losers (Kaldor-Hicks does not require compensation).

At least part of the problem with Kaldor-Hicks efficiency arises from the fact that it only requires a hypothetical redistribution. If the winners could compensate the losers, there is a net social gain and the change in question is efficient (at least in terms of social wealth maximization).

The solution is thus obvious: Force the winners to compensate the losers. Tax the winners a sufficient amount to compensate the losers and redistribute the proceeds to those who lose.
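To make the difference between the two criteria concrete, here is a minimal Python sketch with made-up numbers. The party labels, the amounts, and the `with_transfer` helper are all hypothetical illustrations of my own. Kaldor-Hicks asks only whether the winners’ gains exceed the losers’ losses. Pareto asks whether anyone actually ends up worse off.

```python
# Hypothetical gains (+) and losses (-) from a change, in arbitrary units.
Changes = dict[str, float]


def kaldor_hicks(changes: Changes) -> bool:
    """Passes if total gains exceed total losses (compensation need not be paid)."""
    return sum(changes.values()) > 0


def pareto_improvement(changes: Changes) -> bool:
    """Passes only if no one is worse off and at least one party is better off."""
    values = changes.values()
    return all(v >= 0 for v in values) and any(v > 0 for v in values)


def with_transfer(changes: Changes, payer: str, payee: str, amount: float) -> Changes:
    """Return a copy of `changes` after `payer` actually pays `amount` to `payee`."""
    result = dict(changes)
    result[payer] -= amount
    result[payee] += amount
    return result


# Illustrative numbers only.
before = {"AI firm owners": 100.0, "displaced workers": -60.0}
print(kaldor_hicks(before), pareto_improvement(before))  # True False

after = with_transfer(before, "AI firm owners", "displaced workers", 60.0)
print(after)  # {'AI firm owners': 40.0, 'displaced workers': 0.0}
print(kaldor_hicks(after), pareto_improvement(after))  # True True
```

The change passes Kaldor-Hicks both before and after the transfer. It passes Pareto only once the compensation is actually paid.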

I became even more persuaded of the need for such a tax when I read a new paper, The AI Layoff Trap, by computer science professor Brett Hemenway Falk and information systems professor Gerry Tsoukalas.

Hemenway Falk, Brett and Tsoukalas, Gerry, The AI Layoff Trap (March 02, 2026). Available at SSRN: https://ssrn.com/abstract=6448898

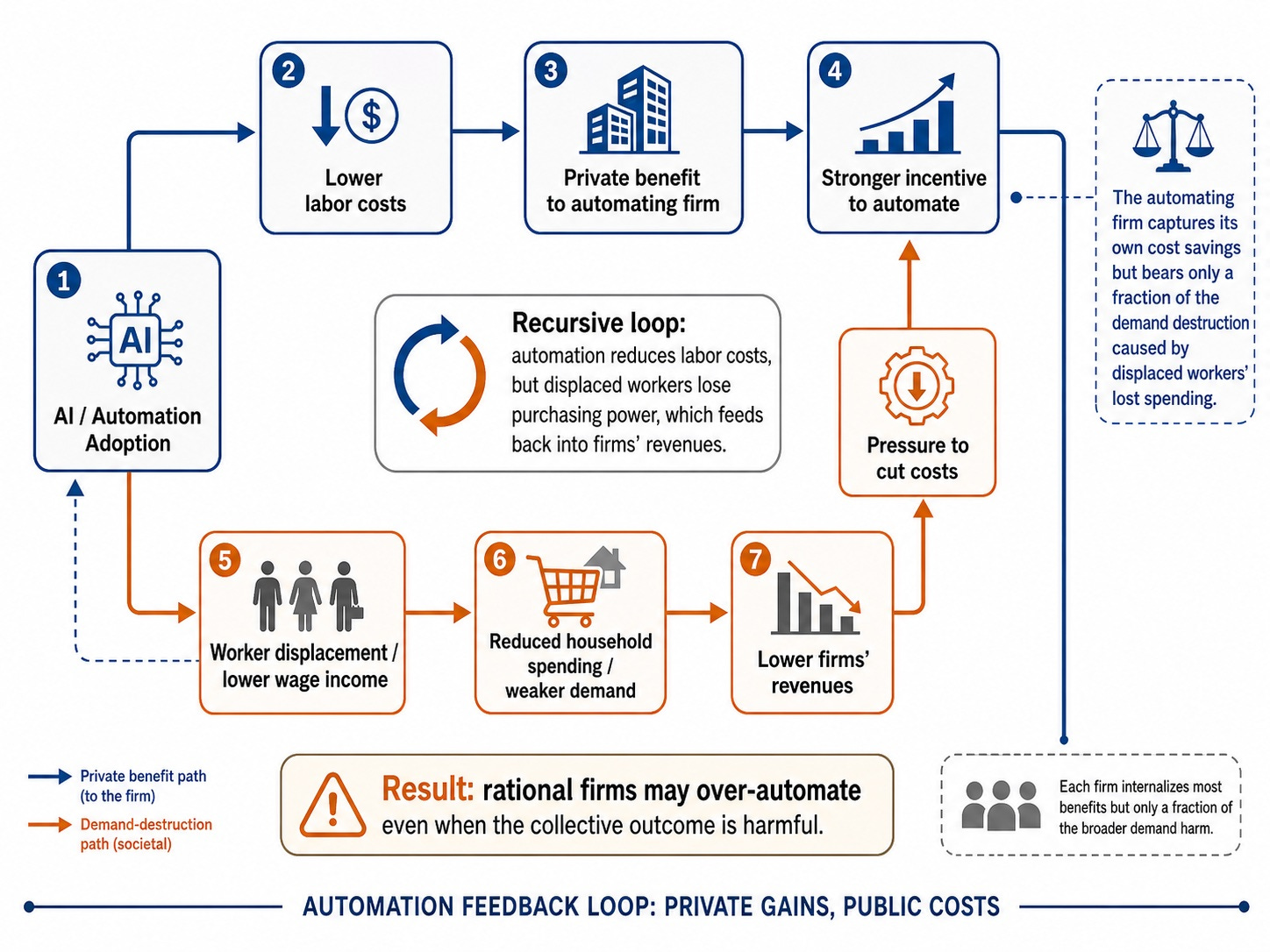

Falk and Tsoukalas argue that AI-driven automation will create a competitive trap. Each private-sector company benefits from replacing workers with AI, but that privately optimal decision is socially harmful because the collective impact of all those layoffs is to reduce consumers’ income. Most consumers, after all, are also workers. Unemployed or underemployed workers will have less to spend. Accordingly, consumer demand for all firms’ products will fall.

In their model, Falk and Tsoukalas make the simplifying assumption that each automated task displaces one worker. This keeps the analysis tractable but assumes a relatively direct substitution channel, rather than AI primarily augmenting workers, creating complementary tasks, or increasing labor demand elsewhere. If AI instead does those things, the problem becomes much less concerning.

The model likewise assumes that layoffs are driven by AI replacing workers. If, as some reports suggest, the layoffs now taking place are not actually caused by AI, and firms are instead blaming (or crediting) AI for layoffs driven by other factors, the problem again becomes less concerning.

But let us assume that they are correct, at least in large part.

If so, AI will function as a demand externality. A negative externality occurs when a firm is able to offload a portion of its costs of producing goods and services onto society. Pollution is a classic example. Pollution harms society, but the producer does not bear the costs generated by its pollution and thus lacks an economic incentive to minimize pollution.

A demand externality occurs when an individual firm’s production or pricing decisions directly affect the revenue and profitability of other firms, usually through their effect on aggregate demand. This often creates a positive (that is, self-reinforcing) feedback loop in which lower consumer demand reduces aggregate producer output, leading to clustered defaults or involuntary unemployment and, in turn, to self-fulfilling prophecies.

In this case, an automating firm captures its own cost savings but bears only a fraction of the demand destruction caused by displaced workers’ lost spending. As a result, rational firms may over-automate even when everyone can foresee that the outcome is collectively harmful. A recursive loop results: automation reduces labor costs, but displaced workers lose purchasing power, which feeds back into firms’ revenues.
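The wedge between private and collective incentives can be sketched with a deliberately stripped-down toy model. This is not Falk and Tsoukalas’s model, which is far richer. It simply assumes `n_firms` identical firms, a cost saving `saving` per automated task, and a loss `demand_loss` to firms’ combined profits from the displaced worker’s reduced spending, shared equally across firms. All names and numbers are hypothetical.

```python
def private_gain(saving: float, demand_loss: float, n_firms: int) -> float:
    """Net gain to the single firm that automates one task.

    Toy assumption: the firm keeps the whole cost saving but bears only its
    1/n_firms share of the demand loss from the displaced worker's spending.
    """
    return saving - demand_loss / n_firms


def collective_gain(saving: float, demand_loss: float) -> float:
    """Net effect on all firms' combined profits: the full demand loss counts."""
    return saving - demand_loss


def over_automates(saving: float, demand_loss: float, n_firms: int) -> bool:
    """True when automating pays the firm but lowers firms' combined profits."""
    return private_gain(saving, demand_loss, n_firms) > 0 > collective_gain(saving, demand_loss)


# Illustrative numbers only: saving of 10 per task, demand loss of 15, five firms.
print(private_gain(10.0, 15.0, 5))    # 7.0
print(collective_gain(10.0, 15.0))    # -5.0
print(over_automates(10.0, 15.0, 5))  # True
```

Under these assumptions, any task whose saving falls between the firm’s own share of the demand loss and the full demand loss gets automated, even though firms collectively would be better off leaving it alone.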

Falk and Tsoukalas show that this distortion grows with the number of firms, since each firm bears an ever-smaller share of the demand it destroys. In game-theory terms, AI thus creates a Prisoner’s Dilemma: all firms automate even though all would be better off under collective restraint. In other words, even though companies may recognize this danger, they will be unable to avoid it, because each individual firm has an incentive to automate before its rivals do.
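The Prisoner’s Dilemma structure can be checked with a hypothetical toy game. This is again my own simplification and not the paper’s model. The constants `BASE_PROFIT`, `SAVING`, and `DEMAND_LOSS` are made-up numbers. Each firm’s profit rises by `SAVING` if it automates. It also falls by its equal share of the demand loss from every firm that automates, including itself.

```python
BASE_PROFIT = 100.0   # hypothetical profit before anyone automates
SAVING = 10.0         # hypothetical cost saving from automating one task
DEMAND_LOSS = 15.0    # hypothetical hit to combined profits per displaced worker


def profit(automates: bool, others_automating: int, n_firms: int) -> float:
    """One firm's profit in a symmetric toy game (illustrative assumptions only).

    The firm gains SAVING if it automates, and every automating firm, itself
    included, removes DEMAND_LOSS from combined profits, split equally.
    """
    total = others_automating + (1 if automates else 0)
    own_saving = SAVING if automates else 0.0
    return BASE_PROFIT + own_saving - DEMAND_LOSS * total / n_firms


N = 5

# Automating is a dominant strategy: it pays however many rivals automate.
dominant = all(profit(True, k, N) > profit(False, k, N) for k in range(N))
print(dominant)                 # True

# Yet if every firm follows that logic, each is worse off than under restraint.
print(profit(True, N - 1, N))   # 95.0  (all five firms automate)
print(profit(False, 0, N))      # 100.0 (no firm automates)

# The share of each task's demand loss the automating firm ignores grows with n.
for n in (2, 5, 20):
    print(n, DEMAND_LOSS * (n - 1) / n)   # 2 7.5, then 5 12.0, then 20 14.25
```

With a single firm, the firm bears the whole demand loss. `profit(True, 0, 1)` is 95.0 versus 100.0 for refraining, so that firm would not automate. In this sketch the trap appears only because the loss is spread across rivals.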

Falk and Tsoukalas show that the resulting loss is not merely redistribution from workers to owners. Instead, over-automation can harm both workers and firm owners. As workers lose income, firms lose demand and therefore profits. This makes the problem a deadweight loss rather than simply a fairness or inequality issue.

The paper is highly mathematical, so much so that much of it is way above my pay grade. But the narrative argument makes a lot of intuitive sense. After all, it is a more sophisticated version of the argument I put forward in my earlier post.

So let us assume the authors are correct. What to do?

Falk and Tsoukalas contend that several popular responses—universal basic income, capital income taxation, worker equity participation, upskilling, and Coasian bargaining—may cushion losses but do not fully correct the externality. Accordingly, they propose a Pigouvian automation tax, set equal to the uninternalized reduction in demand per automated task.

A Pigouvian tax is named after British economist Arthur C. Pigou, who set out the idea in his 1920 book *The Economics of Welfare*. It is a tax on a market transaction that creates a negative externality, that is, an additional cost borne by individuals not directly involved in the transaction. Examples include tobacco taxes, sugar taxes, and carbon taxes.

For instance, smoking in a public restaurant creates a negative externality because secondhand smoke can affect nonsmokers and worsen their long-term health outcomes. Drivers of gas-powered vehicles pay the gas tax to account for the externalities of pollution and wear and tear to the roads. Levying an excise tax in these situations can serve to recoup some of the cost of these externalities and “internalize” the cost of the externality to the purchase of the product. Sin taxes in general are also used to discourage consumption and “price-in” the externalities of products such as gambling, alcohol, and tobacco.

The Pigouvian concept of internalizing externalities in order to correct inefficient market outcomes suggests that the size of the excise tax should be equal to the cost of the negative externality. (LINK)
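Here is a minimal sketch of how such a tax would work in a stripped-down toy model of my own. The model has `n_firms` identical firms. Each automated task saves the firm `saving` and costs firms’ combined profits `demand_loss`, which is shared equally. The uninternalized demand loss per task is then `demand_loss * (n_firms - 1) / n_firms`. The per-task tax below is set at that amount. This is one illustrative reading of the automation tax Falk and Tsoukalas propose, not their actual formula. The function names and numbers are hypothetical.

```python
def automation_tax(demand_loss: float, n_firms: int) -> float:
    """Per-task tax equal to the demand loss the automating firm does not bear.

    Toy assumption: each firm bears demand_loss / n_firms, so the
    uninternalized part is demand_loss * (n_firms - 1) / n_firms.
    """
    return demand_loss * (n_firms - 1) / n_firms


def private_gain_after_tax(saving: float, demand_loss: float, n_firms: int) -> float:
    """Firm's net gain from automating one task once it also pays the tax."""
    return saving - demand_loss / n_firms - automation_tax(demand_loss, n_firms)


# Illustrative numbers: saving 10, demand loss 15, five firms.
print(automation_tax(15.0, 5))                # 12.0
print(private_gain_after_tax(10.0, 15.0, 5))  # -5.0: automating no longer pays

# A task whose saving exceeds the full demand loss is still worth automating.
print(private_gain_after_tax(20.0, 15.0, 5))  # 5.0
```

With the tax in place, the firm’s net gain equals `saving - demand_loss`. The firm therefore automates only those tasks whose cost savings exceed the full demand they destroy. The hard part, as the objections below suggest, is knowing `demand_loss` in the first place.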

Unfortunately, implementing that solution poses multiple difficulties. Falk and Tsoukalas acknowledge one:

> . . . the model is a closed-sector game, and a unilateral automation tax could push adoption offshore, strengthening the case for multilateral coordination or border-adjustment mechanisms analogous to those used in carbon policy.

In addition, however, the standard objections to Pigouvian taxes seem equally applicable here. How do you measure the size of the externality? How do you determine the effectiveness of the tax in reducing the demand externality? Can a single tax system take into account all the individual variations between locations, industries, firms, and individuals?

The paper assumes regulators can observe or approximate automation rates and impose a per-task tax equal to the uninternalized demand loss. This is conceptually clean but would be administratively demanding in reality, especially when AI augments some workers, replaces others, and changes task composition gradually.

Do those objections mean we should simply ignore the problem?

I think not. Recursive loops can lead to death spirals. Public policy likely cannot wait and see.